NVIDIA’s Spectrum-XGS Ethernet Technology Aims to Revolutionize Giga-Scale AI Data Centers

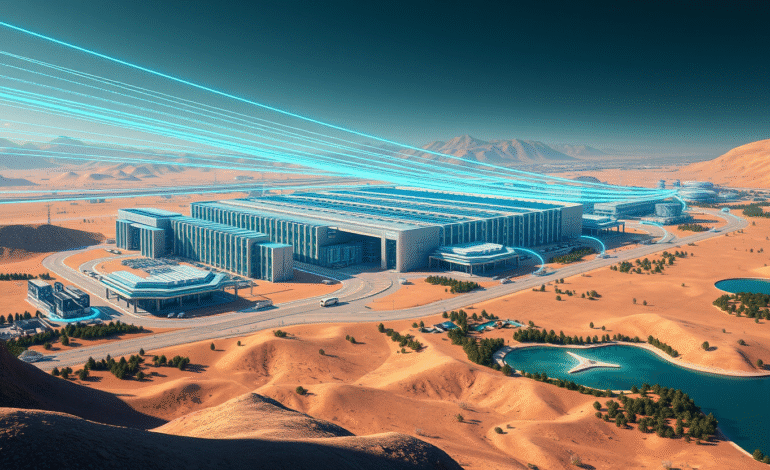

In the rapidly evolving realm of artificial intelligence (AI), a pressing issue has surfaced: as AI data centers reach their capacity limits, operators face a costly choice between expanding facilities and finding ways to integrate multiple locations seamlessly. NVIDIA’s latest innovation, Spectrum-XGS Ethernet technology, aims to alleviate this challenge by connecting AI data centers across vast distances, transforming them into what NVIDIA refers to as “giga-scale AI super-factories.”

Unveiled ahead of Hot Chips 2025, the networking technology is NVIDIA’s response to a growing problem that is forcing the AI sector to rethink how it distributes computing power.

As AI models grow more complex, they demand computational resources that often exceed what a single facility can provide. Traditional AI data centers are constrained by power capacity, physical space, and cooling.

When additional processing power is required, companies typically resort to constructing new facilities; however, coordinating work between separate locations has been problematic due to networking constraints. The problem lies in standard Ethernet infrastructure, which suffers from high latency, unpredictable variation in that latency (known as “jitter”), and inconsistent data transfer speeds when connecting distant locations.

These issues impede the efficient distribution of complex calculations across multiple sites within AI systems.
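To make the distinction between latency and jitter concrete, here is a minimal Python sketch. It is an illustration only: the function name `latency_stats`, the choice to measure jitter as the mean absolute difference between consecutive samples (one common simple definition among several), and the sample values are all assumptions, not anything taken from NVIDIA’s announcement.

```python
from statistics import mean


def latency_stats(samples_ms):
    """Return (mean latency, jitter) for latency samples in milliseconds.

    Jitter is computed here as the mean absolute difference between
    consecutive samples. Other definitions exist; this one is an
    assumption chosen for simplicity.
    """
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples")
    diffs = [abs(b - a) for a, b in zip(samples_ms, samples_ms[1:])]
    return mean(samples_ms), mean(diffs)


# Hypothetical values for illustration, not measurements.
steady_link = [10.0, 10.0, 10.0, 10.0]
bursty_link = [4.0, 16.0, 4.0, 16.0]

print(latency_stats(steady_link))
print(latency_stats(bursty_link))
```

Both hypothetical links have the same average latency, but only the second one has jitter. In workloads where many processors must wait for the slowest message before continuing, that variability can matter as much as the average.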

Spectrum-XGS Ethernet introduces what NVIDIA calls a “scale-across” capability—a third approach to AI computing that complements existing strategies such as “scale-up” (increasing the power of individual processors) and “scale-out” (adding more processors within the same location).

This technology integrates into NVIDIA’s existing Spectrum-X Ethernet platform and incorporates several key innovations.

According to NVIDIA’s announcement, these improvements can nearly double the performance of the NVIDIA Collective Communications Library (NCCL), which handles communication between multiple graphics processing units (GPUs) and computing nodes.

Cloud infrastructure company CoreWeave, renowned for GPU-accelerated computing, plans to be among the early adopters of Spectrum-XGS Ethernet.

“With NVIDIA Spectrum-XGS, we can interconnect our data centers into a single, unified supercomputer, offering our clients access to giga-scale AI capable of accelerating breakthroughs across every industry,” stated Peter Salanki, CoreWeave’s cofounder and chief technology officer.

This implementation will serve as a practical test case for whether the technology can deliver on its promises under real-world conditions.

The announcement follows a series of networking-focused releases from NVIDIA, including the original Spectrum-X platform and Quantum-X silicon photonics switches. This pattern suggests that NVIDIA recognizes networking infrastructure as a critical bottleneck in AI development.

“The AI industrial revolution is underway, and giga-scale AI factories are the essential infrastructure,” said Jensen Huang, NVIDIA’s founder and CEO, in the press release. While Huang’s characterization reflects NVIDIA’s marketing perspective, the underlying challenge he describes—the need for more computational capacity—is acknowledged across the AI industry.

The technology could change how AI data centers are planned and operated. Instead of building massive single facilities that strain local power grids and real estate markets, companies might distribute their infrastructure across multiple smaller locations while maintaining performance levels.

However, several factors could influence Spectrum-XGS Ethernet’s practical effectiveness, including network performance across long distances, which is subject to physical limitations, and the quality of underlying internet infrastructure between locations. The technology’s success will depend on its ability to function within these constraints.
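The physical limitation mentioned above can be estimated with simple arithmetic. The sketch below assumes light travels through optical fiber at the vacuum speed of light divided by a refractive index of about 1.47 (a typical figure for silica fiber, used here as an assumption), and it ignores switching, queuing, and routing detours. The function names are hypothetical.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum, km/s
FIBER_REFRACTIVE_INDEX = 1.47      # assumed typical value for silica fiber


def one_way_delay_ms(distance_km, refractive_index=FIBER_REFRACTIVE_INDEX):
    """Lower bound on one-way propagation delay over fiber, in milliseconds."""
    speed_in_fiber_km_s = SPEED_OF_LIGHT_KM_S / refractive_index
    return distance_km / speed_in_fiber_km_s * 1000.0


def round_trip_ms(distance_km, refractive_index=FIBER_REFRACTIVE_INDEX):
    """Lower bound on round-trip propagation delay, in milliseconds."""
    return 2.0 * one_way_delay_ms(distance_km, refractive_index)


# Hypothetical site separations, chosen only for illustration.
for km in (10, 100, 1000):
    print(f"{km:>5} km: one-way {one_way_delay_ms(km):.3f} ms, "
          f"round trip {round_trip_ms(km):.3f} ms")
```

Under these assumptions, sites 1,000 km apart see roughly 5 ms of one-way delay before any equipment adds its own. No networking technology can remove that floor; it can only manage traffic around it.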

Furthermore, the complexity of managing distributed AI data centers extends beyond networking to include data synchronization, fault tolerance, and regulatory compliance across different jurisdictions—challenges that networking improvements alone cannot solve.

NVIDIA states that Spectrum-XGS Ethernet is “available now” as part of the Spectrum-X platform; however, pricing and specific deployment timelines have yet to be disclosed. The technology’s adoption rate will likely depend on cost-effectiveness compared to alternative approaches, such as building larger single-site facilities or using existing networking solutions.

In summary, if NVIDIA’s technology functions as promised, we could witness faster AI services, more powerful applications, and potentially lower costs as companies gain efficiency through distributed computing. However, if the technology fails to deliver in real-world conditions, AI companies will continue grappling with the costly choice between building ever-larger single facilities or accepting performance compromises.

CoreWeave’s upcoming deployment will serve as the first major test of whether connecting AI data centers across distances can truly work at scale. The results will likely determine whether other companies follow suit or stick with traditional approaches. For now, NVIDIA has presented an ambitious vision—but the AI industry is still waiting to see if the reality matches the promise.