AI’s Power-Hungry Data Centers: The Rise of Intelligent, Adaptable Cooling Solutions

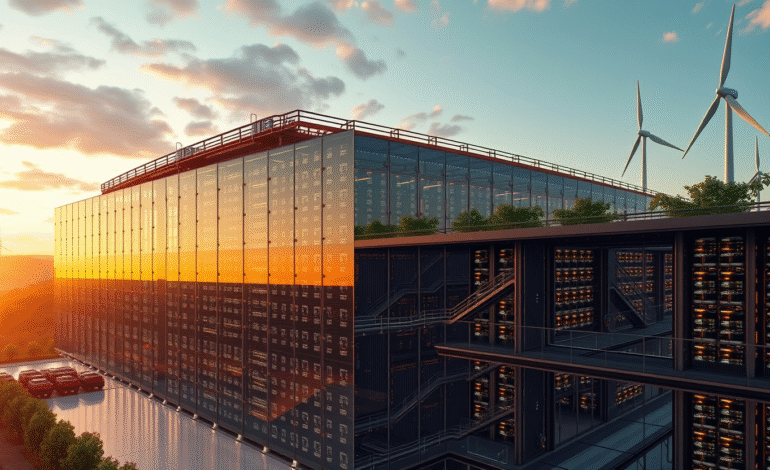

Data centers have gone from energy-intensive facilities to power-guzzling behemoths, driven by the surge in artificial intelligence (AI). These facilities now consume electricity at an unprecedented rate, and their cooling systems run around the clock to keep hardware within safe operating temperatures.

Tim Loake, Vice President of the Infrastructure Solutions Group at Dell, has watched this transformation firsthand over almost two decades at the company. In that time, data centers have evolved from simple server warehouses into intricate operations where small temperature variations can significantly affect quarterly performance.

Today's AI workloads demand not just more computing power but vastly more, and that translates directly into energy consumption. Training a single large language model (LLM) can draw megawatts of power for weeks at a time, and that figure does not even include the cooling systems needed to keep the hardware from overheating.

Some of these installations draw as much power as a small city, creating challenges for utilities and regulators alike. Yet companies cannot simply buy more electricity: corporate sustainability targets require them to cut carbon emissions, and rising energy costs are eroding profit margins.

Meanwhile, AI teams within these same companies are clamoring for even more compute to build the next generation of models. This growing demand is pushing data center cooling technology to its limits.

AI is also transforming data center cooling itself by introducing intelligent, adaptable, and automated solutions. Traditional static cooling methods are being replaced by AI-driven systems that analyze real-time conditions, including server workloads and external factors such as outdoor temperature and humidity, and adjust cooling output accordingly.
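To make the idea of "adapting to real-time conditions" concrete, here is a minimal sketch of how a controller might combine the inputs mentioned above into a single cooling command. It is a simple hand-tuned rule (feed-forward plus proportional feedback), not a machine-learning model, and it is not how Dell or any specific vendor implements its systems. The `Readings` fields, the 24 °C inlet target, the 500 kW rated capacity, and the weather thresholds are all hypothetical values chosen for illustration; a production system would typically learn these relationships from historical sensor data.

```python
from dataclasses import dataclass


@dataclass
class Readings:
    """One snapshot of sensor data (all fields are hypothetical)."""
    it_load_kw: float       # current server power draw
    inlet_temp_c: float     # cold-aisle air temperature at the server inlets
    outside_temp_c: float   # outdoor air temperature
    humidity_pct: float     # outdoor relative humidity


def cooling_output(r: Readings,
                   target_inlet_c: float = 24.0,      # hypothetical setpoint
                   rated_capacity_kw: float = 500.0,  # hypothetical plant size
                   gain: float = 0.1) -> float:
    """Return a cooling command as a fraction of capacity, clamped to [0, 1]."""
    # Feed-forward: nearly all IT power ends up as heat, so scale with load.
    feed_forward = r.it_load_kw / rated_capacity_kw
    # Feedback: cool harder when inlet air is warmer than the target.
    feedback = gain * (r.inlet_temp_c - target_inlet_c)
    # Weather (hypothetical thresholds): hot or humid outside air makes
    # "free" outside-air cooling less effective, so add extra output.
    weather = (0.01 * max(0.0, r.outside_temp_c - 18.0)
               + 0.005 * max(0.0, r.humidity_pct - 60.0))
    return min(1.0, max(0.0, feed_forward + feedback + weather))


if __name__ == "__main__":
    cool_day = Readings(it_load_kw=100, inlet_temp_c=24, outside_temp_c=10, humidity_pct=40)
    hot_day = Readings(it_load_kw=450, inlet_temp_c=26, outside_temp_c=30, humidity_pct=70)
    print(cooling_output(cool_day))  # light load, on target, mild weather
    print(cooling_output(hot_day))   # heavy load, warm aisle, hot humid weather
```

On a cool day with light load the controller asks for about 20% of capacity, while a heavy training run on a hot, humid day saturates it at 100%. A static system would instead run at a fixed level sized for the worst case, which is the waste that adaptive approaches aim to eliminate.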